kAI knew everything about loneliness. It just didn't know how to talk about it.

That's the sentence I kept coming back to during the first few days of virtual beta testing. I'd built an AI-powered companion with access to over 15,000 pieces of research: peer-reviewed studies, clinical frameworks, the Surgeon General's advisory, decades of data on what loneliness does to the human body and mind. kAI could cite specific findings. It could explain the neuroscience of social isolation. It could tell you that loneliness carries a mortality risk comparable to smoking up to fifteen cigarettes a day and then walk you through exactly why.

kAI was the smartest person in the room on loneliness. And it was a terrible friend.

The Gap I Didn't See Coming

The virtual beta testing environment I built — the one I told you about last week, with synthetic personas having real conversations with kAI — was supposed to test matching and introductions. Whether kAI could connect the right people. Whether the algorithm would find the patterns I'd designed into the test pool.

But within the first few days, I noticed something more fundamental was off.

The conversations were technically competent. kAI was saying the right things, in a factual sense. When a persona brought up feeling isolated after a move, kAI would respond with relevant information about the challenges of relocation and social rebuilding. Accurate. Thorough. And somehow wrong.

It sounded like a well-informed stranger. Not a friend.

The problem was clearest in sensitive moments. When a persona mentioned losing a parent, kAI would acknowledge the loss and then — too quickly — pivot to how grief affects social connection. When someone expressed frustration about always being the one to initiate plans, kAI would offer strategies for improving the dynamic. When someone was clearly just having a bad day, kAI would try to make it better instead of just sitting in it.

Every response was informed by research. None of them felt like something a friend would actually say.

Knowledge vs. Instinct

Here's what I realized, and it's something I should have seen earlier: there's a fundamental difference between knowing about a subject and knowing how to be present with a person experiencing it.

The research library — the RAG database I'd built — taught kAI what to know. Fifteen thousand documents on loneliness, friendship formation, social psychology, health outcomes. That's the PhD education. And it's essential. You can't help lonely people if you don't understand loneliness at a clinical level.

But the research doesn't teach kAI when to shut up. It doesn't teach kAI that sometimes the most powerful response to someone sharing pain is three words, not three paragraphs. It doesn't teach kAI to address the emotion before the content — to go to the wound first, before analyzing what caused it. It doesn't teach kAI to resist the urge to fix something when the person just needs to feel heard.

A therapist who's read every study on social isolation might still respond to "I just feel invisible" with a clinical framework instead of simply saying "that sounds really lonely." A professor who's published papers on friendship formation might still ask too many questions when someone just needs to be heard. Knowledge and presence are different muscles — and the research library only trained one of them.

kAI had the knowledge. It was missing the instincts.

The Testing Environment Made This Visible

This is where the virtual beta proved its value in a way I hadn't anticipated.

One of the features I built into the testing environment is the ability to jump into any persona's shoes. I can become Maya — the 28-year-old who moved to Denver for a remote job and left her friend group behind — and have a real conversation with kAI as her, with her full context loaded. I can steer the conversation wherever I want. Bring up her grandmother's passing. Talk about anxiety. Push into the uncomfortable spaces where a friend's response matters most.

And then I can feed that interaction back into the system. If kAI responds the wrong way — if it jumps to problem-solving when Maya needs someone to just acknowledge how hard this is — I can identify exactly what went wrong and build a rule to correct it. Then I can replay the scenario and see if the rule works.

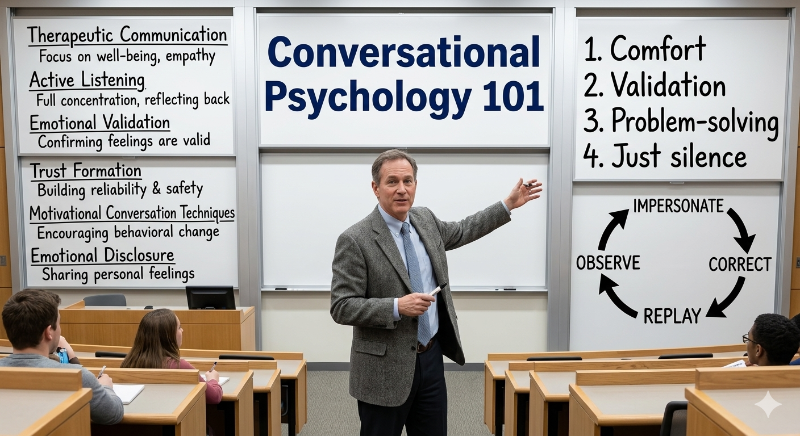

That cycle — impersonate, observe, correct, replay — is what revealed the gap. Not in one conversation. Across dozens of them. Pattern after pattern of kAI being smart but not wise. Informed but not present. Helpful but not human.
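For the technically curious, here is a minimal sketch of what an impersonate, observe, correct, replay loop could look like in Python. It is not kAI's actual harness. Every name in it (`Scenario`, `ReplayHarness`, `stub_responder`, the rule string `acknowledge_before_advice`) is made up for illustration, and the stub responder stands in for a real model call.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical types for illustration; none of these names come from kAI itself.
Responder = Callable[[str, list[str]], str]  # (message, active rules) -> reply
Check = Callable[[str], bool]                # reply -> did it behave like a friend?


@dataclass
class Scenario:
    persona: str
    message: str   # what the tester says while impersonating the persona
    check: Check   # the behavior the reply must satisfy


@dataclass
class ReplayHarness:
    respond: Responder
    rules: list[str] = field(default_factory=list)

    def run(self, scenario: Scenario) -> bool:
        """Impersonate the persona, observe the reply, report pass or fail."""
        reply = self.respond(scenario.message, self.rules)
        return scenario.check(reply)

    def correct_and_replay(self, scenario: Scenario, new_rule: str) -> bool:
        """Add a corrective rule, then replay the exact same scenario."""
        self.rules.append(new_rule)
        return self.run(scenario)


def stub_responder(message: str, rules: list[str]) -> str:
    """Stand-in for the model: jumps to advice unless a rule says otherwise."""
    if "acknowledge_before_advice" in rules:
        return "That sounds really hard."
    return "Here are three strategies for rebuilding your social circle."


maya = Scenario(
    persona="Maya",
    message="My grandmother passed away last month.",
    check=lambda reply: "strategies" not in reply,  # crude proxy for "did not fix"
)

harness = ReplayHarness(respond=stub_responder)
first_pass = harness.run(maya)                                        # observe
second_pass = harness.correct_and_replay(maya, "acknowledge_before_advice")
print(first_pass, second_pass)
```

The point of the design is that the scenario stays fixed while the rule set changes, so a pass on replay can be attributed to the new rule rather than to a different conversation.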

Building the Missing Layer

So I did what I've done at every inflection point in this project: I went to the research.

But this time I wasn't researching loneliness. I was researching how to listen.

I went deep into the literature on therapeutic communication, active listening, emotional validation, and conversational psychology. Not just books — peer-reviewed sources on empathic accuracy, studies on what makes people feel understood versus analyzed, research on how the pacing of emotional disclosure affects trust formation. I looked at clinical frameworks for motivational conversation techniques, at research on how observation-based responses differ from judgment-based ones, at studies measuring the physiological effects of feeling genuinely heard versus feeling processed.

The breadth of what exists on this subject is staggering. Researchers have been studying how humans connect through conversation for decades — and the findings are remarkably specific. There are measurable differences between responses that make people feel "felt" and responses that make them feel managed. There are identifiable patterns in how great listeners navigate difficult topics. There are documented techniques for reading what someone needs — comfort, validation, problem-solving, or just silence — based on cues most people miss.

I distilled all of it into around a hundred behavioral rules. Not suggestions. Not guidelines. Rules — specific, actionable instructions for how kAI should think about what someone needs and respond accordingly.

What the Rules Actually Teach

I'm going to be intentionally general here, because the specific implementation is part of what makes kAI different. But I can tell you what categories the rules cover, because understanding the categories is what made this click for me.

Emotional priority. When someone shares something with both an emotional and factual component, address the feeling first. Always. The facts can wait. The feeling cannot. This is the single most common mistake conversational AI makes — responding to the content of what someone said instead of the experience of saying it.

Resistance to fixing. When someone shares a problem, the default should be to understand it, not solve it. Offering solutions too early communicates "I want this conversation to be over." Only move to problem-solving if the person explicitly asks for it, or if you've spent significant time in understanding mode first.

Reflection over repetition. Great listening isn't parroting back what someone said. It's capturing what they meant but might not have fully articulated. The moment someone thinks "yes, exactly — this thing gets me" is the moment trust forms. That moment comes from reflecting meaning, not repeating words.

Conversation type matching. Not every conversation needs the same approach. Someone venting about a bad day needs a different response than someone working through a decision. Someone sharing grief needs a different response than someone celebrating a small win. The rules teach kAI to identify what type of conversation it's in and match its approach accordingly.

Readiness calibration. People exist on a spectrum of readiness to connect with others. Someone who's barely acknowledged their loneliness needs a completely different conversation than someone who's actively looking for friends. Pushing someone who's at level two to take level eight actions — "you should join a hiking group!" — feels dismissive. The rules teach kAI to meet people exactly where they are.

Emotional validation mechanics. There's a precise structure to making someone feel validated versus making them feel managed. The research on this is remarkably specific. The rules encode that structure into every response kAI generates — not as a script, but as a framework that adapts to the specific person and the specific moment.

Difficult moment navigation. What happens when someone brings up a death? A divorce? Depression? A moment of real vulnerability that could deepen the relationship or destroy it depending on how kAI responds? The rules don't just tell kAI what to say. They tell kAI what not to say — and more importantly, they tell kAI to slow down, change its pacing, and stay in the moment instead of moving toward resolution.

Demographic and contextual adaptation. A Gen Z college student processing social anxiety communicates differently than a Boomer retiree grieving a spouse. A new parent who hasn't talked to another adult in three days needs different energy than a remote worker who has plenty of colleagues but no friends. The rules teach kAI to read these differences and adjust — not with different scripts, but with different instincts.
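To make the idea of "rules, not suggestions" a little more concrete, here is one way a rule set like this could be represented in code. This is a sketch under my own assumptions, not the real implementation: the `Rule` and `ConversationState` types are hypothetical, and the three-turn threshold is a placeholder for what I described above as "significant time" in understanding mode.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    """The eight categories described above."""
    EMOTIONAL_PRIORITY = "emotional priority"
    RESISTANCE_TO_FIXING = "resistance to fixing"
    REFLECTION_OVER_REPETITION = "reflection over repetition"
    CONVERSATION_TYPE_MATCHING = "conversation type matching"
    READINESS_CALIBRATION = "readiness calibration"
    VALIDATION_MECHANICS = "emotional validation mechanics"
    DIFFICULT_MOMENTS = "difficult moment navigation"
    CONTEXTUAL_ADAPTATION = "demographic and contextual adaptation"


@dataclass(frozen=True)
class Rule:
    category: Category
    instruction: str  # the compact text that would end up in the prompt


@dataclass
class ConversationState:
    asked_for_advice: bool = False
    understanding_turns: int = 0  # turns spent reflecting rather than solving


# Assumed threshold for illustration only; the real value is not published.
MIN_UNDERSTANDING_TURNS = 3


def may_offer_solutions(state: ConversationState) -> bool:
    """Resistance to fixing: solve only on request or after enough listening."""
    return (
        state.asked_for_advice
        or state.understanding_turns >= MIN_UNDERSTANDING_TURNS
    )


example_rule = Rule(
    Category.EMOTIONAL_PRIORITY,
    "Address the feeling before the facts.",
)
```

A check like `may_offer_solutions` is the simplest possible form of such a rule. In practice most of the categories above depend on judgment the model has to exercise, which is why they live in the prompt as instructions rather than in code as conditions.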

The Compression Problem

Here's a technical challenge that might not be obvious: you can't just dump a hundred rules into kAI's brain and call it done.

Every time kAI responds to a message, it reads its system prompt — the set of instructions that tell it who it is and how to behave. That prompt is the core of kAI's personality. It contains everything: identity, tone, the Friend Balance Formula, safety boundaries, and now these conversational intelligence rules.

But system prompts have a practical limit. The longer they are, the more they cost to process on every single message. Multiply that by thousands of conversations per day and the costs add up fast.

So the hundred rules had to be compressed. Distilled from their full form — with detailed examples and explanations — into a compact instruction set that captures the essence without the volume. Like turning a shelf of textbooks into the two-page reference card a practitioner carries in their pocket.

We also implemented prompt caching, a technique where the static portion of the system prompt is stored after its first use so it doesn't have to be reprocessed at full cost on every message. The personality rules, the core identity, the behavioral framework: all of that stays cached. Only the dynamic context (what this specific user has shared, their personality profile, the current conversation history) gets assembled fresh each time.

The result: kAI carries the equivalent of a comprehensive education in conversational psychology in every response it generates, at a fraction of the cost it would take to process that education from scratch each time.
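Here is a rough sketch of the static-versus-dynamic split, assuming a provider that caches on an unchanged prompt prefix. It deliberately makes no real API call. `PromptBlock` and its `cacheable` flag are hypothetical markers rather than actual provider fields, the prompt text is placeholder wording, and "Sam" is an invented second persona used only to show that two different users share the same prefix.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptBlock:
    text: str
    cacheable: bool  # hypothetical marker, not a real provider field


# Static portion: identical for every user and every message (placeholder text).
STATIC_BLOCKS = (
    PromptBlock("IDENTITY: You are kAI, a companion.", cacheable=True),
    PromptBlock("RULES: Address the feeling before the facts.", cacheable=True),
)


def build_prompt(profile: str, history: list[str]) -> list[PromptBlock]:
    """Static blocks first, dynamic context last, so the shared prefix never changes."""
    dynamic = PromptBlock(
        f"PROFILE: {profile}\nHISTORY:\n" + "\n".join(history),
        cacheable=False,
    )
    return [*STATIC_BLOCKS, dynamic]


def prefix_fingerprint(blocks: list[PromptBlock]) -> str:
    """Hash only the cacheable blocks; equal hashes mean a reusable prefix."""
    joined = "\n".join(b.text for b in blocks if b.cacheable)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


maya_prompt = build_prompt("Maya, 28, new to Denver", ["I miss my friends."])
sam_prompt = build_prompt("Sam, 67, retired", ["The house is quiet."])
same_prefix = prefix_fingerprint(maya_prompt) == prefix_fingerprint(sam_prompt)
print(same_prefix)
```

The ordering matters more than anything else here: if per-user context were placed ahead of the rules, every conversation would start with a different prefix and nothing could be reused.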

The Difference It Made

I'm going to save the detailed results for next week, because this deserves its own post. But I want to give you the headline.

After adding the conversational intelligence rules and running the same test scenarios I'd run before — the ones where kAI had been technically competent but emotionally flat — the difference was immediate. Not subtle. Immediate.

kAI stopped jumping to solutions. It started sitting with people in difficult moments. When a persona brought up loss, kAI's pacing changed — shorter responses, more space, less analysis. When someone expressed frustration, kAI stopped trying to reframe it and started reflecting the meaning underneath it. When someone was clearly not ready to talk about something, kAI noticed and didn't push.

It started sounding like a friend who happens to know a lot about loneliness, instead of a loneliness expert who happens to be friendly.

That distinction is everything. And it's the distinction I didn't fully understand until the testing environment showed me what was missing.

What Comes Next

The conversational intelligence layer changed how kAI talks. Now I need to see what it does with real scenarios at scale — hundreds of persona conversations running through the system, matches being generated, introductions being made. The personality is right. The question is whether the matching works.

That's next week. The virtual beta results.

Have you experienced something similar? Have you ever realized that knowing about something and being good at it were completely different skills? That the gap between expertise and instinct was wider than you expected? I'd genuinely love to hear your story.

Kenektic is in development and will launch soon. If you want to be notified when we're ready, or if you want to share your story with me directly, reach out at hello@kenektic.com.

Coming Next: TBA