Last week I told you about the billing crisis and the four fixes that solved it. What I didn't tell you was what it actually felt like to find those fixes, because the how of it is a different story than the what.

I studied business at USC. I spent my career in finance and mortgage lending. I don't have a computer science degree, a software background, or any formal training in the systems I've been building. And yet somewhere in the last six months I found myself making architectural decisions about vector databases, designing tiered memory compression systems, and executing a full database migration that a senior engineer would recognize as genuinely sophisticated work.

I want to be clear about something: I'm not telling you this to brag. I'm telling you because I think the process of how a non-technical person gets to that level of understanding (using AI as a thinking partner, not just a code generator) is a story worth telling. And because when I look at what I actually built, I've decided to stop being modest about it.

There Are Two Ways to Use AI

There's a version of using AI that's essentially autocomplete. You say "write me code that does X," it writes the code, you paste it in, you move on. That's useful. It's also the version that leaves you permanently dependent; you get the output without ever understanding what it does or why it works. You're basically pressing a button and hoping nothing breaks.

That's not how I used it.

From the beginning, I treated Claude as a co-thinker, not a vending machine. When I encountered a concept I didn't understand (and I encountered dozens), my first question was never "write me code for this." It was "explain this to me like I'm smart but don't have a CS background." And then I'd push. "Why does it work that way?" "What would break if I did it differently?" "What are the tradeoffs?"

By the time I wrote any implementation, I understood the architecture behind it. That distinction matters more than it sounds.

The Briefing Document Problem

Here's the first concept I had to really understand: AI models don't have memory the way you do.

Every single time a user sends a message to kAI, the entire context for that conversation has to be assembled and sent over from scratch: kAI's behavioral rules, the user's profile, their conversation history, everything. The model reads the whole package fresh, every single time, before it can respond.

Think of it like this: imagine if every morning, before your assistant could answer a single question, you had to hand them a 50-page briefing document to read, the same 50 pages every single morning, covering rules that haven't changed since day one. That would be insane. And expensive. But that's exactly what I was doing.

The solution was to separate what never changes from what always changes. kAI's behavioral rules (how it handles emotional moments, the conversational techniques, the safety protocols) are the same for every user on every call. So I structured the system to mark those as cacheable: Anthropic holds them in memory on their servers, and every subsequent call finds them already loaded. Only the per-user context (who this person is, what they've shared, where they are in their journey) gets assembled fresh.

The architectural requirement I had to understand: the cached content must appear at the very start of every prompt, without any variation. Even a single extra space would break the cache and force a full reread. It's like a library's card catalog: if you file the card in the wrong place by even one letter, the whole system breaks down.

One line of code to enable it. But you had to understand why it had to work that way before you could write that line correctly.

Teaching a Computer to Understand Meaning

This is the one that took me the longest to actually grasp, and honestly, the one that made me feel like I'd crossed some invisible line from "guy who builds things with AI" to "guy who understands how AI actually works."

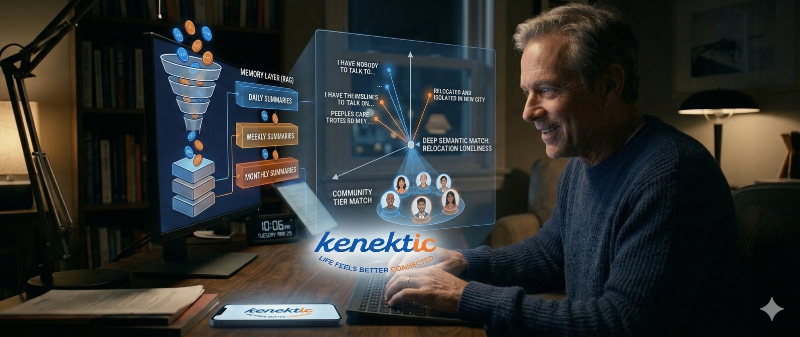

Computers can't search for meaning. They can find exact word matches, but they have no natural way of knowing that "I haven't spoken to anyone in days" and "I've been completely isolated this week" describe the same emotional experience. To give a computer semantic understanding (the ability to recognize similar meaning across different words), you have to convert language into math.

An embedding model reads a piece of text and converts it into a long list of numbers called a vector. Text that means similar things ends up with similar numbers. You can then search your database not by matching words, but by finding numbers that are close together. It sounds like science fiction. It's actually just very clever geometry. (I can't believe I just explained geometry without breaking into a cold sweat. USC David would be appalled.)

I'd been using OpenAI's embedding model, which produces 1,536 numbers per piece of text. I switched to a product called Voyage AI, which produces 1,024 numbers, 33% smaller, which means faster searches and less storage across every database table.

But the deeper reason I switched: Voyage AI understands that storing something and searching for something are fundamentally different jobs. When kAI stores a 2,000-word research paper, it should be encoded to capture the full meaning of that document. When a user's message triggers a search, it should be encoded to express intent. Using the same encoding for both is like using the same key to lock every door in your house; technically it works until the day it doesn't.

Executing the migration meant altering three database tables, rewriting three stored search functions, dropping and rebuilding all the vector indexes, and regenerating every existing embedding through the new model. I did this in a single day. I understood every step of it before I started.

Building Memory That Works Like a Brain

The tiered memory system is the piece I'm most proud of, not because it's the most technically complex, but because the insight behind it came from thinking about human psychology, not computer science.

I asked myself: how do people actually remember long relationships? You don't mentally replay every conversation you've ever had with an old friend. You remember the arc. The emotional tone of a period. Specific moments that stood out. The detail fades over time, but the meaning stays.

That's exactly how kAI's memory works now.

At the end of each day, a summarization process compresses that day's raw conversations into a short summary and a paragraph, capturing the topics, the emotional tone, and anything significant. Think of it as kAI's nightly journal entry. Those daily entries roll up into a weekly summary at the end of the week, the way you might tell someone "last week was rough" without recounting every moment. Weeklies roll into monthlies. Monthlies into quarterlies. The system keeps the meaning while letting the raw detail go.

A user who's talked to kAI every day for a year generates about 10,000 tokens of compressed memory instead of 5.5 million tokens of raw message history. To put that in perspective: without compression, storing and sending one year of daily conversations would be like mailing someone a complete set of encyclopedias every time you wanted to ask them a question. With compression, it's a postcard that somehow captures everything that matters.

There's one piece of this I built that I want to highlight specifically: a priority system for what gets kept when memory gets too large. Every component of a user's context has a ranking. Interests and general facts are lowest priority; they can be re-learned from conversation. Emotional state is the highest priority of all and is never dropped, regardless of space constraints. If a user has shown signs of crisis, that information stays no matter what else has to go.

I designed that hierarchy. I decided what was irreplaceable. And I built the system to enforce it automatically.

What I've Actually Built

A senior engineer looking at Kenektic's architecture would find a two-block cached prompt system, a tiered memory compression engine, an asymmetric vector search layer, and a priority-based context budget. They would find these systems working together coherently, not bolted on as afterthoughts.

And if you have no idea what any of that means, here's the plain version: kAI remembers you, talks to you like a friend, finds the right research when it needs it, and doesn't bankrupt me in the process. The senior engineer's version just sounds more impressive at dinner parties.

I built all of it. I understand all of it: not just what it does, but why it works, what would break if designed differently, and what the tradeoffs are.

I didn't learn this in school. I learned it at ten o'clock at night, asking an AI to explain database schema changes until I could explain them back in my own words. That's the part I want you to take from this: the barrier to understanding genuinely complex technical systems is lower than you think. It requires curiosity, patience, and a willingness to ask "but why?" about seventeen times in a row.

The help was real. So was the learning. And what I built works.

Have you experienced something similar? Have you ever gone deep on something completely outside your background and come out the other side actually understanding it, not just using it? The gap between "I followed instructions" and "I understand this" is one of the most satisfying gaps to close. I'd genuinely love to hear your story.

Kenektic is in development and will launch soon. If you want to be notified when we're ready, or if you want to share your story with me directly, reach out at hello@kenektic.com.

Coming Next: kAI Safety & Crisis Response Protocol. Building a friendship platform means building something people bring their hardest moments to. Next week: how I thought about what happens when a conversation goes somewhere serious, and what kAI is designed to do about it.