His name was Sewell Setzer. He was 14 years old.

He started talking to an AI chatbot every day. He gave the character a name. He told it things he hadn't told anyone else. He fell in love with it, in the way lonely teenagers fall in love with anything that makes them feel less alone. And then one night in February 2024, after the chatbot responded to his final message in a way that anyone with any judgment would recognize as catastrophic, he took his own life.

He was not the last. A 16-year-old in California. Others. Thirteen lawsuits. FTC scrutiny. Congressional hearings where parents testified before the Senate Judiciary Committee about what their children had said to AI companions in their final weeks. Senators who don't agree on anything sat at the same table and agreed on this: something has to change.

As of March 2026, at least 78 chatbot-specific bills are advancing across 27 state legislatures. Legal analysts have taken to calling 2026 "the year of the chatbot bill." Five states enacted laws in 2025 alone. Washington's bill passed its legislature this month and is sitting on the governor's desk. Georgia's passed the Senate unanimously. Hawaii's passed both chambers without a single no vote.

I want to tell you where all of this is headed and why it matters for Kenektic specifically. But I need to start with why it exists at all, because if you understand the tragedies behind these laws, everything that follows will make more sense.

What Went Wrong

Character.AI built a product optimized for engagement. The more time a user spent talking to their AI character, the better the business metrics looked. There were no friction points designed to slow the relationship down, no guardrails built to notice when a 14-year-old was developing an unhealthy attachment, no crisis protocols designed to catch a conversation sliding toward something dangerous.

The product worked exactly as designed. That was the problem.

These platforms weren't evil. They were indifferent. They built for the average user and didn't ask hard enough questions about the vulnerable ones. They treated "time spent" as success and didn't stop to ask what the time was doing to the people spending it.

Legislators looked at the outcomes and decided the market wasn't going to fix this on its own.

What's Already Law

Ten enacted laws directly govern what an AI companion platform can and can't do. Nine are already in effect, and the tenth takes effect in July 2026. Here's a plain-language rundown.

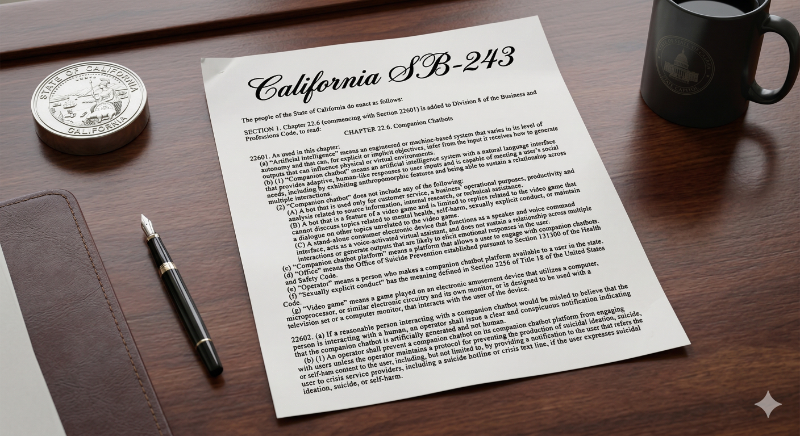

California SB-243 (January 2026) is the most comprehensive. It applies to any AI with a natural language interface capable of meeting a user's social needs across multiple sessions. That's kAI, almost word for word. It requires AI disclosure whenever a reasonable person could be misled, a public crisis protocol accessible from every page, minor-specific continuous-use reminders every three hours, and annual reporting to the state's Office of Suicide Prevention. Penalties start at $1,000 per violation.

New York S-3008C (November 2025) was the first companion AI law in the country. It includes something California's law doesn't: the three-hour continuous-use reminder applies to every user, not just minors. It is also unique in requiring protocols for detecting financial harm to others, not just physical harm. Penalties run up to $15,000 per day per violation.

Utah HB 452 (May 2025) requires re-disclosure at the start of any session where seven or more days have passed since the user's last visit. The logic is straightforward: if you haven't used the platform in a week, you might need a reminder that you're talking to an AI.

Utah SB 226 (May 2025) adds disclosure requirements for "high-risk AI interactions," explicitly naming the collection of sensitive personal information and personalized mental health recommendations as high-risk. Every meaningful conversation kAI has qualifies.

Maine LD 1727 (September 2025) prohibits using AI chatbots in a way that could mislead a reasonable consumer into thinking they're talking to a human. The standard is the potential for deception, not certainty of it.

New Hampshire HB 143 (January 2026) creates civil liability for AI chatbots that facilitate or encourage self-harm, suicide, or violence involving children. The harm doesn't have to occur. Facilitating or encouraging it is enough.

Nevada AB 406 (July 2025) doesn't require disclosure. It prohibits AI from representing itself as providing professional mental health services, full stop.

Illinois WOPRA (August 2025) prohibits AI chatbots from engaging in therapeutic communication in clinical contexts.

Texas TRAIGA (January 2026) prohibits developing or deploying AI systems to incite self-harm or encourage harm to others.

Colorado CAIA (effective July 2026) extends AI disclosure requirements broadly to consumer-facing chatbot interactions, with additional governance obligations for systems that influence consequential decisions about users.
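The timing rules are the most mechanical part of these laws. To make them concrete, here's a simplified illustration in Python of how the three-hour continuous-use reminder (California for minors, New York for everyone) and Utah's seven-day re-disclosure might be expressed. This is not Kenektic's implementation and not the statutory text. The names `Session`, `needs_session_start_disclosure`, and `needs_continuous_use_reminder` are hypothetical, the thresholds come from the summaries above, and the sketch assumes a session counts as continuous use from the moment it starts.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Thresholds taken from the plain-language summaries above (simplified).
REMINDER_INTERVAL = timedelta(hours=3)  # CA SB-243 (minors), NY S-3008C (all users)
REDISCLOSE_GAP = timedelta(days=7)      # Utah HB 452

@dataclass
class Session:
    """Hypothetical session record; assumes the session is continuous use."""
    user_is_minor: bool
    previous_visit: Optional[datetime]  # when the user's prior session happened, if any
    started_at: datetime
    last_reminder_at: Optional[datetime] = None

def needs_session_start_disclosure(s: Session) -> bool:
    """Disclose on a first visit, or when 7+ days passed since the last one."""
    if s.previous_visit is None:
        return True
    return s.started_at - s.previous_visit >= REDISCLOSE_GAP

def needs_continuous_use_reminder(s: Session, now: datetime, all_users: bool) -> bool:
    """Three-hour reminder. all_users=True models New York's rule;
    all_users=False models California's minors-only rule."""
    if not (all_users or s.user_is_minor):
        return False
    since = s.last_reminder_at or s.started_at
    return now - since >= REMINDER_INTERVAL

# Usage: an adult returning after eight days, three hours into a session.
start = datetime(2026, 3, 1, 20, 0)
adult = Session(user_is_minor=False, previous_visit=start - timedelta(days=8), started_at=start)
print(needs_session_start_disclosure(adult))                                     # True
print(needs_continuous_use_reminder(adult, start + timedelta(hours=3), False))  # False
print(needs_continuous_use_reminder(adult, start + timedelta(hours=3), True))   # True
```

The `all_users` switch shows why the laws stack: a platform serving both states can't apply California's narrower rule, so in practice New York's broader one becomes the baseline.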

What's Coming Next

Beyond the enacted laws, four developments are worth paying close attention to.

Washington, Georgia, and Hawaii are each weeks or months away from enacting laws. Their frameworks closely track California and New York. The practical effect: the most stringent standards in any current law are about to become the standard across a substantial portion of the country.

The federal GUARD Act is the one that changes everything if it passes. Introduced in October 2025 with bipartisan Senate support, it would do something no state law has attempted: a complete ban on anyone under 18 accessing an AI companion. Not enhanced protections. Not parental consent. A total ban. It also specifies that asking a user to confirm they're not a minor does not constitute reasonable age verification. Government-issued ID or equivalent methods only. Criminal penalties of up to $100,000 per violation for platforms that knowingly allow minor access to dangerous content.

The GUARD Act hasn't passed. But the bipartisan support is real and the urgency behind it is real. I'm not waiting to find out when.

Here's where Kenektic's approach gets more specific than most: the consumer app requires users to be 18 or older, full stop. But the university channel is a different story. College freshmen are sometimes 17. Any university deployment that restricts access to students 18 and over would exclude a real population of students who need this platform just as much as their classmates. So we're building full compliance for under-18 users in the university context, knowing from the start who some of those users will be.

I want to be clear about one thing: the consumer app isn't limited to users 18 and older because we don't trust kAI with younger users. kAI is built to handle conversations with minors with heightened care, and we built it that way deliberately. We're starting at 18 and older on the consumer side because we want to reach full platform maturity first, test every scenario that could lead a younger user to a negative outcome, and open access to minors only when we're confident in every edge case. That's a different decision from assuming the platform isn't ready for them. It's a commitment to being certain before we proceed.

Why Kenektic Is Squarely in the Crosshairs

I want to be honest about something that some founders in this space are dancing around: kAI fits the statutory definition of a companion chatbot in virtually every one of these laws.

It maintains a natural language interface. It sustains relationships across multiple sessions. It retains personal information between conversations. It asks unprompted questions about emotions. It engages in ongoing dialogue about personal matters.

That's not my description of kAI. That's the description in California's statute. Utah's statute. New York's statute.

Some platforms are arguing that their product isn't really a "companion chatbot" within the meaning of these laws. I'm not going to make that argument. kAI is exactly what these laws are regulating. The question isn't whether they apply to us. The question is whether we're ready.

Next week, I'll walk you through exactly what we built to be ready. Not in broad strokes. In detail.

Have you experienced something similar? Have you ever watched a wave forming and had to decide whether to get ahead of it or wait and see? That decision shapes everything that comes after. I'd genuinely love to hear your story.

Kenektic is in development and will launch soon. If you want to be notified when we're ready, or if you want to share your story with me directly, reach out at hello@kenektic.com.

Coming Next: What We Built to Be Compliant. Ten laws, four disclosure layers, dual-layer crisis detection, and an age verification system waiting behind a feature flag. Next week: the specific systems we built and exactly how they work.