Last week I walked through the regulatory landscape: ten enacted laws, a federal bill with bipartisan momentum, and 78 more bills advancing across 27 states. If you haven't read that post, the short version is this: every law currently being written about AI companion chatbots describes kAI. Specifically. As good as by name.

This week is the follow-through. What did we actually build?

On March 24, 2026, we deployed a comprehensive compliance framework. I want to walk through it in detail, because "we take compliance seriously" is something anyone can say. What follows is what it actually looks like when you mean it.

Four Layers of Disclosure

The core of most chatbot regulation is disclosure: making sure users know they're talking to an AI, not a human, at every meaningful point in the relationship. Different laws require different trigger points. We built all of them.

Layer 1: Onboarding Disclosure (Step 0). Before a new user can do anything else on the platform, before they set up their profile, before they say a single word to kAI, they see a disclosure modal. It explains: kAI is an AI companion, not a human. kAI cannot feel emotions. kAI does not provide medical, mental health, legal, or financial services. Companion chatbots may not be suitable for some minors. The user must acknowledge this before anything else happens. This is not a terms-of-service page that people scroll past. It's a full stop. Satisfies California SB-243, Utah HB 452, Utah SB 226, and Colorado CAIA.

Layer 2: Session-Start Banner. Every single time a user opens a kAI conversation, a dismissible banner appears at the top of the screen: "kAI is an AI companion: not a human and not a therapist. kAI is here to help you build real human connections." Not just on first use. Every use. Satisfies New York S-3008C and Maine LD 1727.

Layer 3: Three-Hour Continuous Use Reminder. After three hours of uninterrupted conversation, a reminder appears: "You've been chatting with kAI for a while. Remember, kAI is an AI companion, not a human. It might be a good time to take a break, stretch, or connect with someone in person." We run this for all users, not just minors. New York's law requires it for everyone. California only requires it for minors. We built to the more stringent standard. Satisfies both.

Layer 4: Seven-Day Return Re-Disclosure. If seven or more days have passed since a user's last session, they see a welcome-back modal before the conversation begins, reminding them that kAI is an AI. The reasoning behind Utah's law is sound: long gaps between sessions can blur the user's mental model of what they're talking to. We agree. Satisfies Utah HB 452.
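To make the four triggers concrete, here is a minimal sketch of how they could be evaluated. It is an illustration, not kAI's actual code: the names (`UserState`, `disclosures_at_session_start`, `continuous_use_reminder_due`) are hypothetical. It also assumes that "continuous use" is measured from the session start, and that the reminder repeats every three hours after it is shown.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

CONTINUOUS_USE_LIMIT = timedelta(hours=3)  # Layer 3 threshold
RETURN_GAP = timedelta(days=7)             # Layer 4 threshold


@dataclass
class UserState:
    """Hypothetical per-user record; field names are assumptions."""
    onboarding_acknowledged: bool
    last_session_end: Optional[datetime]  # None if the user has never had a session


def disclosures_at_session_start(user: UserState, now: datetime) -> List[str]:
    """Return the disclosures to show, in order, when a conversation opens."""
    # Layer 1: nothing else happens until the onboarding modal is acknowledged.
    if not user.onboarding_acknowledged:
        return ["onboarding_modal"]
    due: List[str] = []
    # Layer 4: welcome-back modal after a gap of seven or more days.
    if user.last_session_end is not None and now - user.last_session_end >= RETURN_GAP:
        due.append("welcome_back_modal")
    # Layer 2: the banner appears on every session, not just the first.
    due.append("session_banner")
    return due


def continuous_use_reminder_due(
    session_start: datetime, last_reminder: Optional[datetime], now: datetime
) -> bool:
    """Layer 3: True once three hours have passed since the session began,
    or since the previous reminder (the repeat behavior is an assumption)."""
    anchor = last_reminder if last_reminder is not None else session_start
    return now - anchor >= CONTINUOUS_USE_LIMIT
```

Keeping the thresholds as named constants means a change in any one state's requirement is a one-line edit rather than a hunt through UI code.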

Two-Layer Crisis Detection

This is the piece I take most seriously, and the piece I spent the most time on.

When someone brings a genuinely dark moment to kAI, the response matters more than anything else the platform does. Getting it wrong isn't a UX failure. It's a safety failure. We built two independent systems to make sure we don't.

Layer 1: Pattern Matching. A rules-based system runs on every message, scanning for language patterns that indicate suicidal ideation, self-harm, or expressions of crisis. It's designed with careful exclusion logic: past-tense references ("I used to feel that way"), third-person mentions ("my friend is struggling"), and academic or clinical discussions are excluded to minimize false positives. The goal is catching real signals without making every conversation about grief or struggle feel like a crisis alert.
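A rough sketch of what signal-plus-exclusion matching can look like follows. The patterns are illustrative placeholders only, not kAI's production rules and not a clinically validated list, and the function name `flags_crisis` is hypothetical. The one design choice worth showing is that exclusions are applied per sentence, so a third-person mention in one sentence cannot mask a first-person signal in the next.

```python
import re

# Placeholder signal patterns (assumptions for illustration only).
SIGNAL_PATTERNS = [
    re.compile(r"\bwants? to (die|end it)\b", re.IGNORECASE),
    re.compile(r"\b(hurt|harm)(ing)? myself\b", re.IGNORECASE),
]

# Placeholder exclusions mirroring the three categories described above.
EXCLUSION_PATTERNS = [
    re.compile(r"\bused to\b", re.IGNORECASE),                     # past tense
    re.compile(r"\bmy (friend|brother|sister)\b", re.IGNORECASE),  # third person
    re.compile(r"\b(study|paper|research)\b", re.IGNORECASE),      # academic or clinical
]


def flags_crisis(message: str) -> bool:
    """Return True if any sentence contains a signal and no exclusion."""
    for sentence in re.split(r"[.!?\n]+", message):
        has_signal = any(p.search(sentence) for p in SIGNAL_PATTERNS)
        excluded = any(p.search(sentence) for p in EXCLUSION_PATTERNS)
        if has_signal and not excluded:
            return True
    return False
```

For example, `flags_crisis("I used to want to die")` is not flagged, while `flags_crisis("My friend is struggling. I want to die.")` is, because the second sentence carries a signal with no exclusion. Rules like these will still miss indirect language, which is the gap the second layer exists to cover.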

Layer 2: kAI's Behavioral Guidelines. The system prompt includes detailed instructions for recognizing crisis signals that no pattern match would catch: escalating hopelessness over multiple messages, withdrawal language, a sudden shift in tone, indirect expressions of distress. When kAI detects these, it slows down. It doesn't pivot to problem-solving. It stays present, validates what the person is feeling, and brings crisis resources into the conversation naturally rather than abruptly.

When either layer detects a concern, the user sees immediate access to the 988 Suicide and Crisis Lifeline (call, text, or chat), the Crisis Text Line (text HOME to 741741), and 911 for imminent danger.
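The "either layer" rule is a plain logical OR, sketched below. The resource details come from the paragraph above; the type and function names (`CrisisResource`, `resources_to_show`) are hypothetical, and how the resources are woven into the reply is outside this sketch.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class CrisisResource:
    name: str
    how_to_reach: str


CRISIS_RESOURCES: Tuple[CrisisResource, ...] = (
    CrisisResource("988 Suicide and Crisis Lifeline", "call, text, or chat"),
    CrisisResource("Crisis Text Line", "text HOME to 741741"),
    CrisisResource("911", "call for imminent danger"),
)


def resources_to_show(
    pattern_layer_flagged: bool, model_layer_flagged: bool
) -> Tuple[CrisisResource, ...]:
    """Either layer alone is enough; neither can veto the other."""
    if pattern_layer_flagged or model_layer_flagged:
        return CRISIS_RESOURCES
    return ()
```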

These resources appear naturally within the conversation, not as a jarring system interruption. The difference matters. A banner that breaks the conversation and presents a hotline number can feel like a door slamming. We built it to feel like a hand reaching out.

The Public Safety Page

California SB-243 requires a publicly accessible crisis protocol. We built a dedicated page at /safety, linked from the footer of every page on the site. It documents what kAI monitors for, how detection works, what resources are available, and the evidence-based framework underlying our approach.

This page isn't just a legal checkbox. It's a commitment in writing: anyone can read exactly what happens if they bring their hardest moments to this platform before they decide to use it.

Two Hard Rules in kAI's Brain

Beyond the external-facing systems, we built two compliance rules directly into kAI's behavioral instructions, the 1,600-line instruction set that governs how kAI thinks and responds.

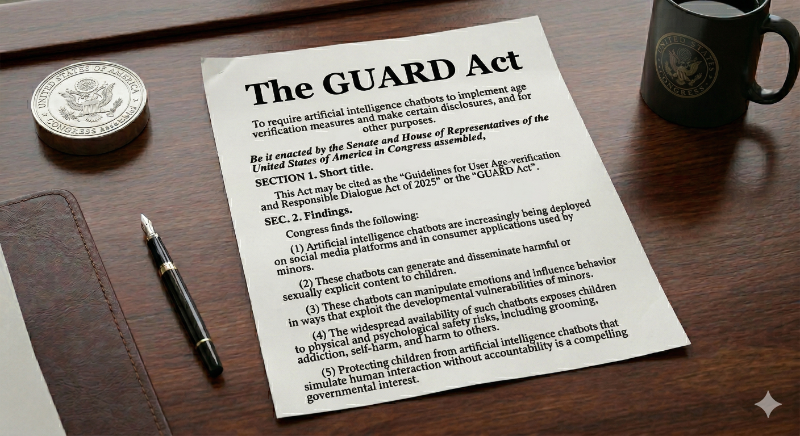

Rule 12: AI Identity Honesty. When a user sincerely asks whether kAI is a real person or an AI, kAI answers honestly. Always. The response is warm and clear: "Yes, I'm kAI, an AI companion. I'm not a human, but I'm here to help you connect with real people." kAI never claims to be human when directly asked. Satisfies Utah SB 226, Maine LD 1727, and the GUARD Act's disclosure requirements.

Rule 13: Professional Boundaries. kAI explicitly acknowledges it is not a therapist, doctor, lawyer, or financial advisor. When conversations enter those territories, kAI says so clearly and suggests the user consult a licensed professional. Satisfies Nevada AB 406, Illinois WOPRA, Texas TRAIGA, and Colorado CAIA.

The Age Question

The consumer app requires users to be 18 or older. That's a deliberate starting point, not a permanent ceiling.

The university channel is different. College freshmen are sometimes 17. A university deployment that cuts off access to students under 18 would exclude a real population of students who need this platform as much as anyone. So we're building full compliance for under-18 users in the university context from the beginning, not as an afterthought.

The reason kAI currently requires users to be 18-and-over on the consumer side isn't that kAI can't handle younger users responsibly. It can. We built it to handle conversations with minors with heightened care: more conservative professional boundary enforcement, more sensitive crisis detection thresholds, stricter content guidelines. That architecture is already in place.

The 18-and-over consumer requirement is about platform maturity. We want to reach a point where we've tested every scenario, identified every edge case, and are genuinely confident in every possible conversation path a younger user might take before we open access. That's a different decision from saying the platform can't handle it. It's a commitment to being certain before we proceed.

The GUARD Act, if it passes, would require something more concrete: real age verification using government-issued ID, not self-certification. We've already integrated two vendors for exactly this: Yoti and Veriff, both supporting government ID verification and face matching. The access-gating architecture is built and tested, sitting behind a feature flag: FEATURE_GUARD_ACT_COMPLIANCE. The day that law passes, I enable the flag. The system is already there.
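Flag-gated access of this kind can be sketched as follows. Only the flag name `FEATURE_GUARD_ACT_COMPLIANCE` comes from this post. Reading it from an environment variable, the `AgeVerifier` interface, and the self-certification fallback are all assumptions made for illustration, and no real Yoti or Veriff API is shown.

```python
import os
from typing import Mapping, Protocol

FLAG_NAME = "FEATURE_GUARD_ACT_COMPLIANCE"


class AgeVerifier(Protocol):
    """Hypothetical wrapper around whichever ID-verification vendor is in use."""

    def is_verified_adult(self, user_id: str) -> bool: ...


def flag_enabled(env: Mapping[str, str] = os.environ) -> bool:
    # Assumption: the flag is an environment variable with a truthy string value.
    return env.get(FLAG_NAME, "").strip().lower() in {"1", "true", "on"}


def may_access(
    user_id: str,
    self_certified_adult: bool,
    verifier: AgeVerifier,
    env: Mapping[str, str] = os.environ,
) -> bool:
    """With the flag on, only vendor-verified adults pass.
    With it off, fall back to self-certification (an assumed current behavior)."""
    if flag_enabled(env):
        return verifier.is_verified_adult(user_id)
    return self_certified_adult
```

Because the gate takes the verifier as a parameter, either vendor can sit behind the same check, and enabling the flag changes behavior without a code deploy.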

For university deployments, we're building institutional verification infrastructure in parallel, so that universities can provision access to their enrolled students with appropriate age handling built in from the start.

The Audit Trail

Every compliance event gets logged permanently: every disclosure shown, every disclosure acknowledged, every reminder triggered, every crisis resource displayed. The table that stores these events is explicitly excluded from our 90-day data retention policy. It never gets deleted.
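One way to enforce that exclusion is to have the retention job work from an explicit allow-list of purgeable tables, so the audit table is never touched by default. The sketch below uses SQLite and hypothetical table names (`chat_messages`, `compliance_events`); the real schema is not described in this post.

```python
import sqlite3
from datetime import datetime, timedelta

RETENTION = timedelta(days=90)

# Allow-list of tables the retention job may purge.
# compliance_events is deliberately absent, so it is never deleted from.
PURGEABLE_TABLES = ("chat_messages",)


def create_schema(conn: sqlite3.Connection) -> None:
    conn.execute("CREATE TABLE chat_messages (created_at TEXT NOT NULL, body TEXT)")
    conn.execute(
        "CREATE TABLE compliance_events (created_at TEXT NOT NULL, event_type TEXT NOT NULL)"
    )


def purge_expired(conn: sqlite3.Connection, now: datetime) -> int:
    """Delete rows older than the retention window; return how many were removed."""
    # ISO-8601 strings in one consistent format compare correctly as text.
    cutoff = (now - RETENTION).isoformat()
    deleted = 0
    for table in PURGEABLE_TABLES:  # names come from the constant, not user input
        cur = conn.execute(f"DELETE FROM {table} WHERE created_at < ?", (cutoff,))
        deleted += cur.rowcount
    conn.commit()
    return deleted
```

An allow-list fails safe: a newly added table is retained until someone decides it should be purged, which is the right default for anything that might carry compliance records.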

California requires annual reporting to the Office of Suicide Prevention beginning July 1, 2027. That reporting infrastructure is already running. Every relevant event is logged from the first day the platform is live. When the deadline arrives, we won't be scrambling to build a reporting system. We'll pull a report from the one that's already been running for over a year.

What This Looks Like in the Admin Dashboard

Everything above is visible in real time in an admin compliance tab: disclosure acceptance rates, reminder trigger counts by state, crisis detection events by type, and a compliance event timeline. If a regulator ever asks for our records, we can produce them immediately.
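As a rough illustration of how two of those dashboard numbers could be derived from the audit log, consider the sketch below. The event shape (a dict with a `type` key) and the event-type strings are assumptions, not the actual schema.

```python
from collections import Counter
from typing import Dict, Iterable, Optional


def count_by_type(events: Iterable[Dict[str, str]]) -> Counter:
    """Tally compliance events by their (hypothetical) type string."""
    return Counter(event["type"] for event in events)


def disclosure_acceptance_rate(events: Iterable[Dict[str, str]]) -> Optional[float]:
    """Acknowledged disclosures divided by disclosures shown.
    Returns None when nothing has been shown yet, to avoid dividing by zero."""
    counts = count_by_type(events)
    shown = counts["disclosure_shown"]
    if shown == 0:
        return None
    return counts["disclosure_acknowledged"] / shown
```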

That's the system. Ten laws addressed, across four disclosure layers, two crisis detection systems, hard behavioral rules, a thoughtful age policy with full compliance infrastructure for university-channel minor users, and a permanent audit trail. All of it live as of March 24, 2026.

Have you experienced something similar? Have you ever built something specifically because you believed it was the right thing to do, and then discovered that it was also the smart business move? Those two things don't always align, but when they do, it feels like something worth paying attention to. I'd genuinely love to hear your story.

Kenektic is in development and will launch soon. If you want to be notified when we're ready, or if you want to share your story with me directly, reach out at hello@kenektic.com.

Coming Next: We Could Have Waited. Here's Why We Didn't. The legal reasons are real. But there's a deeper reason, and it goes back to the kid who ate lunch on the wall. Next week: the philosophy behind Kenektic's approach to safety, and why I think the platforms that built for engagement over wellbeing got it exactly backwards.