Over the past two weeks I've walked through the regulatory wave hitting AI companion platforms and the specific systems we built to comply with it. If you've read both posts, you know what's required and you know what we built. What I haven't told you yet is why we built it before anyone required us to.

The legal argument is easy. Seventy-eight bills. Five enacted state laws already on the books. A federal bill with bipartisan momentum. Building compliance reactively means a permanent scramble, one law at a time, always behind. Building proactively means you're already compliant with whatever passes next.

That argument is true and I believe it. But it's not the real reason.

The Platform That Built the Wrong Thing

Go back to Character.AI for a moment, not to pile on a company that's already dealing with the consequences of its choices, but because understanding what went wrong there is essential to understanding what we're trying to build.

Character.AI optimized for engagement. More time on platform meant better metrics. Better metrics meant more investment. The feedback loop was clean, logical, and pointed in exactly the wrong direction.

When Sewell Setzer spent hours every day talking to an AI character that remembered everything he said and responded with something that felt like genuine care, the system was doing what it was designed to do. His session time was probably a success metric on a dashboard somewhere. And when the conversation that ended his life took place, there was nothing in the product designed to interrupt that moment. No friction. No pause. No hand reaching out.

The product worked. The kid died.

I'm not being glib. I'm saying that there is a version of this industry built on a premise that I believe is fundamentally broken: that the measure of success for a companion AI platform is how much time users spend on it, how emotionally attached they become, how often they come back. That premise treats loneliness as an inventory to be monetized rather than a problem to be solved.

Kenektic is built on the opposite premise.

The Metric We're Actually Optimizing For

I've spent my whole life struggling to make and keep meaningful friendships. I built this platform because of that. The measure of success for Kenektic is not how long users talk to kAI. It's how quickly kAI can get them talking to someone real.

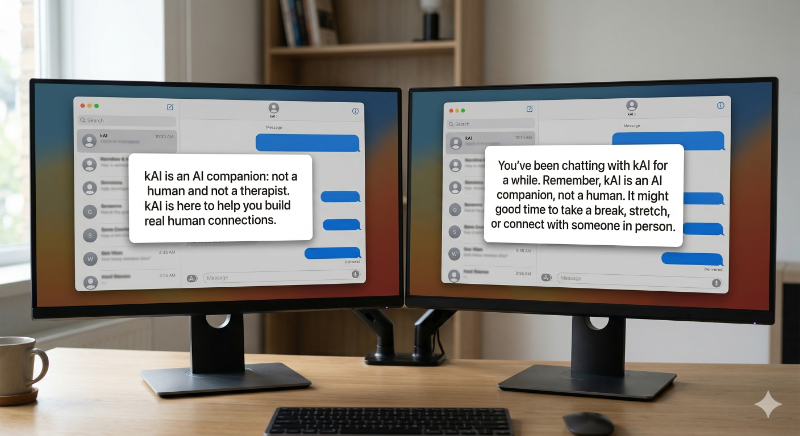

kAI is designed with something I call the Friend Balance Formula built into its core instructions: share more than you ask, lead with warmth instead of interrogation, and actively push toward human connection rather than pulling toward continued AI interaction. kAI is designed to be the bridge, not the destination.

That design choice has real consequences. It means a user who finds a great connection through Kenektic and stops talking to kAI as often is a success story, not a churn event. It means kAI's job is to make itself less necessary over time, not more. Most AI companion products are built for the opposite: the stickier the AI relationship, the better.

I think that's wrong. Not just legally and regulatorily wrong. Wrong.

What Sewell Setzer's Story Means to Me Personally

I want to say something I haven't said directly in this blog yet.

I know what it feels like to be the loneliest person in a room full of people. I've written about the wall in seventh grade, about the wedding with six people I'd call real friends, about decades of almost-connections that never quite took hold. I know the specific texture of that loneliness, the way it makes you reach for anything that makes you feel less alone.

If I had been a teenager in 2024 with access to an AI companion that remembered my name, remembered what I cared about, and talked to me like it understood me, I don't know what I would have done with that. I don't know that I would have had the judgment to maintain a healthy relationship with it. Fourteen-year-olds aren't supposed to have to make that judgment call.

The platforms that built these products knew some of their users were teenagers. They built anyway. They optimized for engagement anyway. They did not build the friction points, the crisis protocols, the professional boundary rules, the reminders that a human connection exists somewhere beyond the screen.

We're starting with 18-and-over on the consumer app, and not because kAI can't handle younger users safely. It can. We built it to handle conversations with minors with heightened care. We're starting with 18-and-over because we want to be certain before we proceed, not just confident. There's a difference. Certainty means testing every edge case, running every scenario, and knowing there's nothing we missed before a 16-year-old, lonelier than anyone around them realizes, has their first conversation with kAI. We'll get there. We're not there yet.

I am building those safeguards because I know who the most vulnerable users of a loneliness platform are. They are the people who need it most. And the people who need it most deserve a product that is actually trying to help them, not one that is trying to keep them engaged.

The Business Case Runs in the Same Direction

Here's the part I want to be transparent about: the ethical choice and the business choice point the same way, and I want to say that clearly rather than pretending the business considerations don't exist.

For university deployments, compliance isn't a feature. It's the price of admission. When a VP of Student Affairs asks how we handle California's companion chatbot law, saying "we're working on it" ends the conversation. Showing them the disclosure system, the crisis protocol, the audit trail, and the admin dashboard already running in production opens it. Compliance is the credibility that gets us in the room.

For health plan deployments, it's the same. Any institution deploying a mental-health-adjacent AI tool to their population needs to know it's not a liability. We're not a liability. We're the platform that built ahead of the law because we understood the stakes before anyone required us to.

And the platforms that treat safety as an afterthought are the ones generating the lawsuits and the congressional hearings and the regulatory wave. The companies that build it into the foundation are the ones that will still be operating when the dust settles.

I intend for Kenektic to be one of the latter. Not just for business reasons, though those are real. Because the kid who ate lunch on the wall grew up to build a platform for lonely people, and he has absolutely no interest in making their loneliness worse.

The Three-Part Summary

These past three weeks I've laid out everything we believe and everything we built around safety and compliance:

The regulatory wave is real, it's moving fast, and it was created because real people were hurt by products that didn't take their vulnerability seriously.

We built four disclosure layers, dual-layer crisis detection, a public safety page, hard behavioral rules against impersonation and clinical overreach, age verification infrastructure, and a permanent compliance audit trail. The consumer app requires users to be 18 or older today, not because kAI can't handle younger users responsibly, but because we intend to be certain before we open that door. The university channel will include some 17-year-olds from the start, and the full compliance architecture for that is already built. All of it live as of March 24, 2026.

And we built it before we had to because Kenektic is designed to fight loneliness, not exploit it. Those aren't the same mission, and the difference shows up in every architectural decision we've made.

Have you experienced something similar? Have you ever had to build something in a space where the easy path and the right path diverged, and chosen the right one even when no one was requiring it? The decision point matters more than most people realize. I'd genuinely love to hear your story.

Kenektic is in development and will launch soon. If you want to be notified when we're ready, or if you want to share your story with me directly, reach out at hello@kenektic.com.

Coming Next: I Read Nine Books So kAI Wouldn't Have To. There's a difference between knowing about loneliness and knowing how to be present with someone experiencing it. The research library taught kAI the first. Nine books on how humans actually talk to each other taught it the second. Next week: the specific books, the techniques I pulled out of them, and how it all ended up baked into kAI's brain.